AI, Neuroplasticity, and the Recurring Fear of Cognitive Decline

Why concerns about intelligence resurface with every new tool, and what neuroscience actually says about how our brains adapt

Why Each New Cognitive Tool Triggers Fears of Intellectual Decline

Remember when Socrates thought writing would make everyone dumb? It appears he was the first Luddite.

In Plato’s dialogue Phaedrus, written around 370 BCE, Socrates worries that writing will weaken memory and produce the appearance of wisdom rather than the real thing. In the story, he recounts a myth in which the god Theuth proudly presents writing to King Thamus of Egypt, claiming it will improve memory and intelligence. Thamus is not impressed. He responds that writing will instead implant forgetfulness in people’s souls, because they will rely on external marks rather than exercising their own memory. According to Thamus, writing would make people seem knowledgeable while actually leaving them ignorant and, worse, confidently so.

In other words, Socrates believed that relying on external tools for memory would weaken internal recall and create the illusion of understanding without the discipline of thinking.

Sound familiar?

The same refrain is circulating again, louder this time because AI is involved.

“AI is making us stupid.”

“People can’t think anymore.”

“We are outsourcing our brains.”

These claims appear daily across social media, opinion pieces, conference panels, and LinkedIn posts. They are often delivered with confidence, rarely with precision, and almost never with a clear definition of what “intelligence” is supposed to mean in the first place.

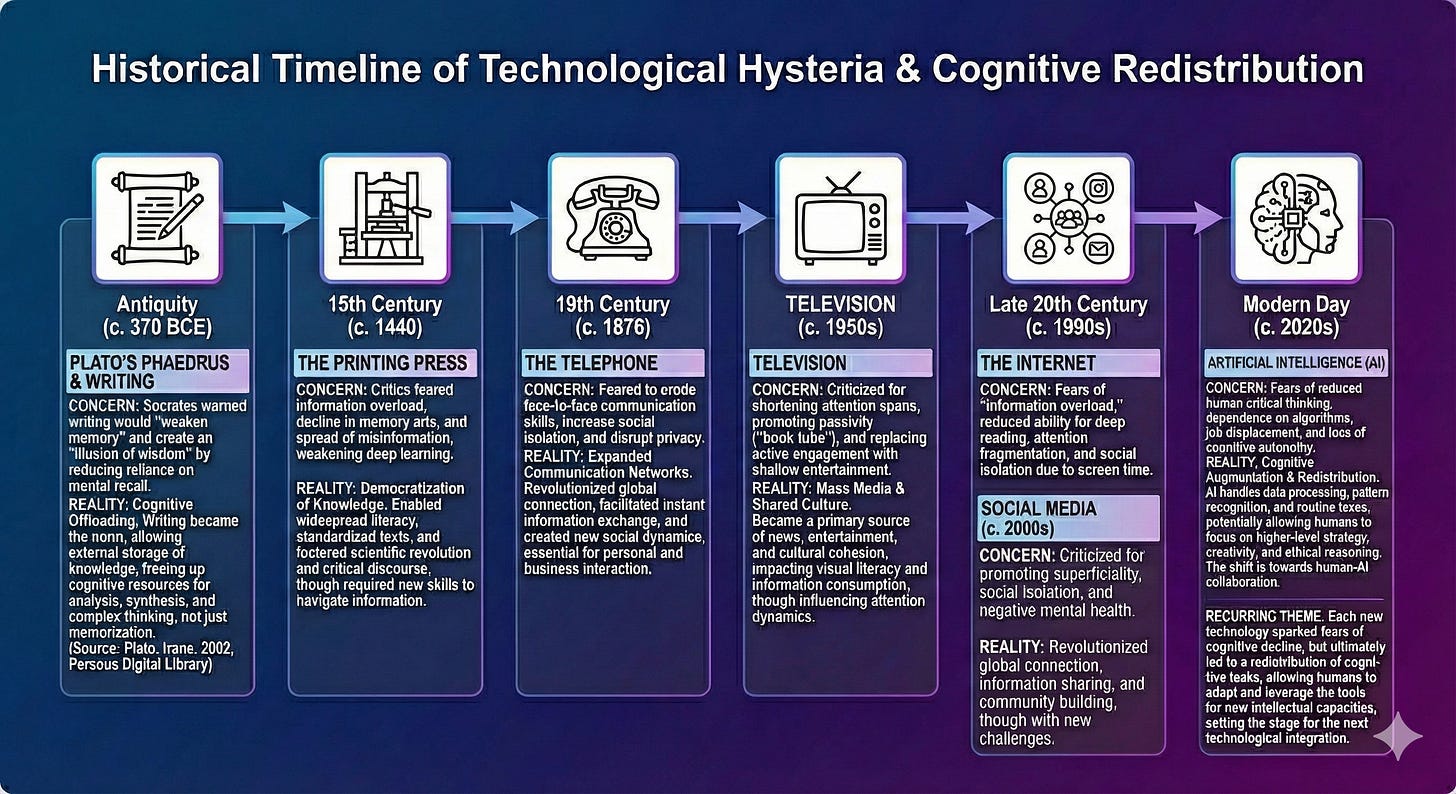

What makes this moment interesting is not that the fear exists, but that it follows a predictable historical pattern. Similar anxieties accompanied the printing press, calculators, spellcheck, search engines, and smartphones.

Before asking whether AI is dumbing us down, it is worth stepping back and examining what the research actually shows, what it does not show, and how human cognition really adapts to new tools.

The Research Gap Between Headlines and Reality

Many recent online conversations reference “MIT research” as proof that AI degrades thinking. These claims often circulate without citations, or with loose references. In the spirit of being unbiased (as a kind gentleman advised me earlier today), the article is listed here for you to peruse:

MIT study (popular press coverage, not peer-reviewed):

Why this study is frequently overstated or misinterpreted

The study has not been published in a peer-reviewed journal, limiting confidence in its methodology, analysis, and conclusions.

The participant pool is small and not demographically representative, making it inappropriate to generalize findings to broader populations.

Tasks used in the study are narrow and artificial, focusing on short-term task performance rather than longitudinal cognitive development.

The study design does not adequately distinguish between short-term performance effects and long-term neuroplastic changes.

Measures of “critical thinking” are task-specific proxies rather than validated, comprehensive cognitive assessments.

The study does not control for prior domain expertise, baseline cognitive skill, or familiarity with AI tools.

AI use was treated as a binary condition rather than examining different usage patterns, such as active interrogation versus passive acceptance.

The findings conflate reduced task effort with reduced cognitive capability, which are not equivalent in cognitive science.

***Tip: Always check your research sources: where the articles come from and who paid for the research in the first place. Not everything is as it seems.***

What does exist is a body of research on automation bias, cognitive offloading, and human trust in intelligent systems. These studies are not new. They predate modern generative AI by decades.

The problem with much of the current commentary is not that it raises concerns, but that it collapses multiple cognitive phenomena into a single moral conclusion.

Across cognitive science, human factors, and learning research, a consistent pattern emerges:

Automation bias, not cognitive decline. Studies show that people may over-trust intelligent systems and miss errors under pressure, not because reasoning capacity is reduced, but because responsibility and vigilance shift when authority is delegated to machines (Parasuraman & Riley, 1997; Mosier et al., 1997).

Memory reallocation, not memory loss. When information is reliably accessible externally, humans adapt by remembering how to retrieve knowledge rather than encoding every detail internally. This reflects strategic cognitive offloading, not degraded memory function (Sparrow, Liu, & Wegner, 2011).

Use-dependent skill weakening. Research on GPS and navigation shows that spatial memory weakens only for skills that are no longer practiced. Overall cognitive capacity remains intact and can be re-strengthened when those skills are re-engaged (Ishikawa et al., 2008).

Attention reshaping, not intelligence erosion. Studies on media multitasking find structural brain differences associated with attentional allocation and control, not generalized reductions in intelligence or learning capacity (Loh & Kanai, 2016).

Design determines outcomes. Learning science distinguishes sharply between passive reliance on technology and active partnership with it. Cognition is strengthened when tools support reasoning and feedback rather than replace effortful thinking (Salomon, Perkins, & Globerson, 1991).

Taken together, this body of peer-reviewed evidence points to adaptation, redistribution, and design-dependent outcomes, not cognitive erosion.

Neuroplasticity, Not Cognitive Decay

The human brain is not a static container of intelligence. It is a plastic system shaped continuously by experience, environment, and repeated behavior.

Neuroplasticity refers to the brain’s ability to reorganize neural pathways based on use, a well-established finding across cognitive neuroscience and learning science (Wolf, 2018). Functions that are exercised frequently become more efficient. Functions that are rarely used become less accessible. This is not damage. It is metabolic efficiency.

This matters because intelligence is not a single skill. It is a bundle of capabilities, including memory, reasoning, pattern recognition, abstraction, and judgment. Tools influence which of these are emphasized.

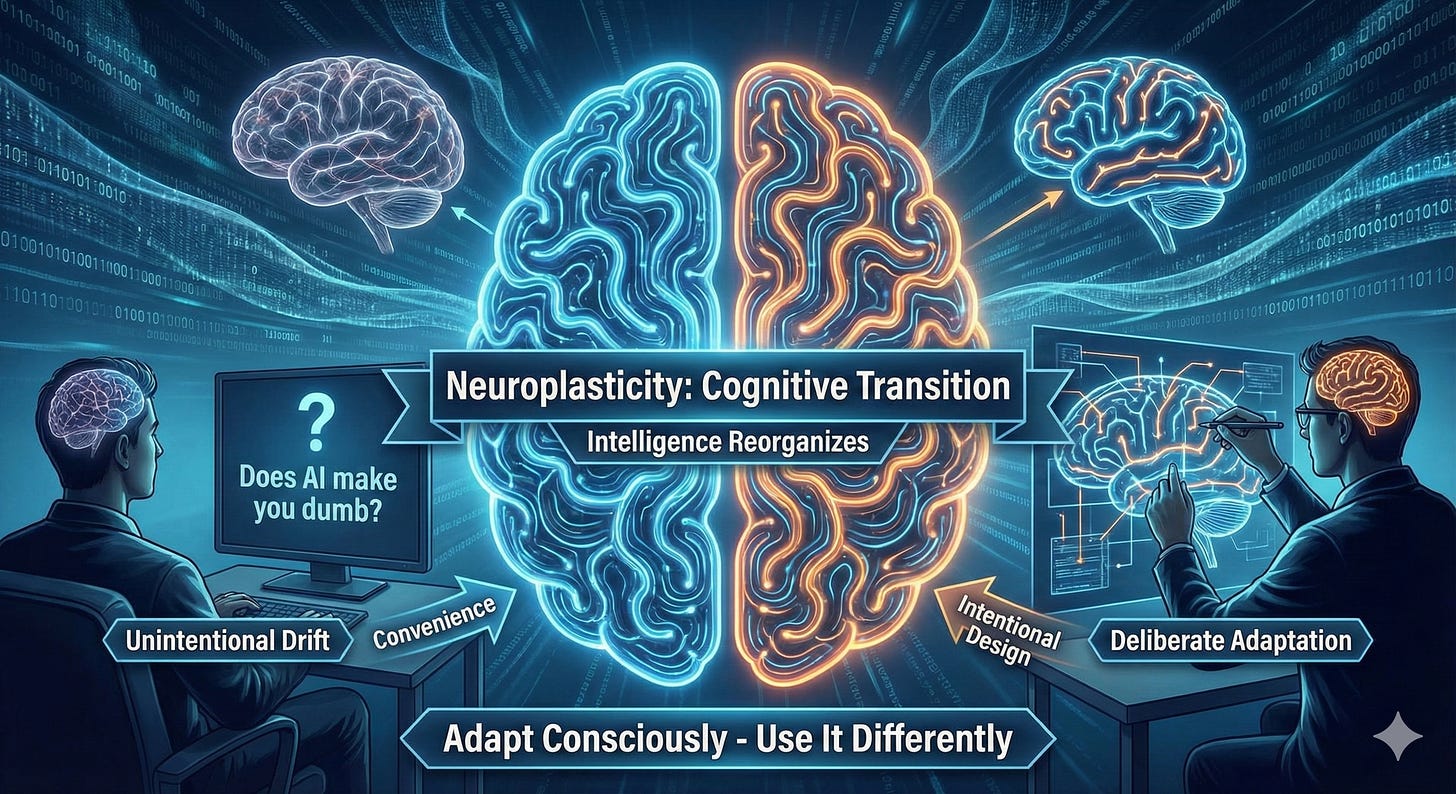

When AI reduces the effort required for certain tasks, the brain does not stop adapting. It adapts toward different cognitive workloads. The question becomes which skills are being practiced less, and whether that reduction is intentional or accidental.

Neuroplasticity does not imply inevitable decline. It implies responsibility.

Memory Is Not Disappearing. It Is Being Reweighted

One of the most common claims is that AI is destroying our ability to memorize. This claim misunderstands how memory works.

Memory has never been about storing everything internally. Even in pre-digital societies, memory relied on external scaffolding, from written language to social transmission. What changes with tools is not whether we remember, but what we remember.

Research consistently shows that humans adapt memory strategies based on environmental affordances, a pattern also consistent with extended cognition theory, which argues that tools can become integrated parts of human thinking rather than competitors to it (Clark & Chalmers, 1998). When external storage is reliable, people prioritize conceptual understanding, relational memory, and retrieval strategies over rote memorization.

This does not mean memorization is gone or impossible. It means it is no longer the default survival strategy.

Importantly, studies on learning and transfer show that people can still memorize effectively when they choose to, particularly when learning involves active engagement and cognitive effort (Kirschner, Sweller, & Clark, 2006). The brain does not lose the ability. It reallocates effort based on perceived utility.

If memorization matters for a task, such as expertise development, language fluency, or complex problem solving, it can still be trained. Neuroplasticity cuts both ways.

The Real Risk Is Passive Cognition

Where the concern becomes legitimate is not in tool use itself, but in passive use.

Cognitive research is clear on this point (Kirschner, Sweller, & Clark, 2006). Learning and skill retention depend on active engagement, feedback, and effortful processing. When users accept outputs without interrogation, correction, or synthesis, learning is shallow.

AI increases the temptation to skip those steps because it produces fluent, confident language. This creates the illusion of understanding without the work of sensemaking.

That is not a failure of intelligence. It is a failure of cognitive design.

The difference between cognitive amplification and cognitive erosion lies in whether the human remains actively involved in reasoning, evaluation, and decision-making.

Why This Moment Feels Different

AI feels different because it touches language, reasoning, and creativity, domains we associate with intelligence itself. But the underlying mechanism is familiar.

Every major cognitive tool has forced a redefinition of what it means to be skilled. Mental arithmetic declined when calculators arrived. Strategic reasoning became more important. Navigation strategies changed with GPS. Spatial memory declined in some contexts, while planning efficiency improved.

We are now seeing the same shift with language and synthesis.

Low-level generation becomes cheaper. Higher-level judgment becomes more valuable.

This is not dumbing down. It is sorting.

What Cognitive Strength Looks Like Now

In this environment, cognitive strength is less about raw recall and more about orchestration.

It shows up as:

The ability to evaluate outputs rather than accept them

The capacity to ask better questions, not just get faster answers

The skill of integrating multiple sources into coherent judgment

Awareness of one’s own cognitive dependencies

These are not skills AI removes. They are skills AI exposes.

Strengths and Weaknesses: AI Use Through a Neuroplasticity Lens

Seen through a neuroplasticity lens, AI is neither purely beneficial nor inherently harmful. It exerts directional pressure on cognition. What matters is where that pressure is applied and sustained.

Strengths and cognitive upsides

Reduces cognitive load on routine and mechanical tasks, freeing mental capacity for higher-order reasoning and synthesis

Accelerates pattern recognition by surfacing connections across large information sets that would otherwise be inaccessible

Supports learning by providing immediate feedback, alternative explanations, and multiple representations of the same concept

Encourages metacognitive awareness when users actively interrogate outputs and compare reasoning paths

Enables rapid iteration, which can strengthen judgment when humans remain responsible for evaluation and decision-making

Weaknesses and cognitive risks

Promotes shallow processing when outputs are accepted passively without verification or reflection

Reduces practice in recall, sequential reasoning, and foundational skill-building if those steps are consistently bypassed

Increases automation bias, particularly under time pressure or when AI outputs appear fluent and authoritative

Weakens error detection when users disengage from sensemaking and treat AI as an answer engine rather than a hypothesis generator

Can distort self-assessment of understanding, creating confidence without corresponding depth

From a neuroplastic perspective, these effects are not permanent traits. They are usage patterns. The brain adapts to what it repeatedly does. AI strengthens cognition where it is used as a partner in thinking and thins cognition where it replaces thinking altogether.

Choosing Adaptation Over Panic

The question “does AI make you dumb” is emotionally compelling but scientifically imprecise.

A more accurate question is whether we are designing our cognitive habits intentionally, or allowing convenience to do it for us.

Neuroplasticity ensures adaptation either way.

The real risk is not that we stop thinking. It is that we stop noticing how we think.

History suggests that intelligence does not disappear under technological pressure. It reorganizes.

The outcome depends on whether individuals and institutions recognize the shift and respond deliberately.

This is not a cognitive collapse. It is a cognitive transition.

And like every transition before it, the people who adapt consciously will not be the ones asking whether intelligence is gone. They will be too busy using it differently.

Bonus: How to Actively Expand Neuroplasticity in an AI-Rich World

Neuroplasticity is not something that happens to you. It is something you can deliberately influence. Research across neuroscience and learning science points to a small number of conditions that reliably support cognitive adaptation, even in environments saturated with automation and AI.

Practices that reliably support neuroplasticity

Effortful learning. Skills strengthen when learning requires active retrieval, error correction, and sustained attention. Passive consumption, even of high-quality information, produces weaker neural encoding.

Deliberate difficulty. Introducing friction, such as explaining concepts without AI assistance or solving problems before checking outputs, reinforces deeper learning.

Novelty and variation. Exposure to new domains, perspectives, and problem types encourages the formation of new neural connections rather than reinforcing narrow pathways.

Sleep and recovery. Memory consolidation and neural reorganization occur disproportionately during sleep. Cognitive performance gains are constrained without adequate recovery.

Physical movement. Aerobic exercise has strong links to neurogenesis, synaptic plasticity, and executive function.

Try going on a nice hike to the edge of Europe, for example.

Relevant researchers and accessible resources

Andrew Huberman. Stanford neuroscientist known for translating peer-reviewed neuroscience into practical protocols related to learning, focus, sleep, and neuroplasticity.

Michael Merzenich. Pioneer of neuroplasticity research whose work demonstrated that adult brains retain significant capacity for reorganization through targeted training.

Maryanne Wolf. Cognitive neuroscientist whose research on reading, attention, and deep comprehension highlights how digital environments reshape neural pathways.

Used intentionally, AI does not reduce neuroplasticity. It changes the terrain on which plasticity operates. The question is whether we allow that terrain to be shaped entirely by convenience, or whether we introduce the conditions that keep adaptation active, flexible, and durable.

References (APA)

Sparrow, B., Liu, J., & Wegner, D. M. (2011). Google effects on memory. Science, 333(6043), 776–778. https://doi.org/10.1126/science.1207745

Parasuraman, R., & Riley, V. (1997). Humans and automation. Human Factors, 39(2), 230–253. https://doi.org/10.1518/001872097778543886

Kirschner, P. A., Sweller, J., & Clark, R. E. (2006). Why minimal guidance during instruction does not work. Educational Psychologist, 41(2), 75–86. https://doi.org/10.1207/s15326985ep4102_1

Wolf, M. (2018). Reader, come home: The reading brain in a digital world. HarperCollins.

Clark, A., & Chalmers, D. (1998). The extended mind. Behavioral and Brain Sciences, 21(1), 7–19. https://philpapers.org/rec/CLATEM

Frequently Asked Questions

Does AI actually make people less intelligent over time?

Current peer-reviewed research does not support the claim that AI reduces general intelligence or causes long-term cognitive decline. What studies consistently show is cognitive redistribution. When certain tasks are offloaded, the brain adapts by emphasizing different skills. The risk lies in passive reliance, not in tool use itself.

If AI handles writing, memory, or synthesis, will I lose those skills?

Skills weaken when they are not practiced, but they are not permanently lost. Neuroplasticity allows abilities like memory, reasoning, and writing to be retrained when they are intentionally exercised. AI changes which skills are practiced by default, not which skills are possible.

What is the most important habit to preserve thinking in an AI-rich environment?

Active engagement. Using AI as a collaborator rather than an answer engine preserves judgment, error detection, and learning transfer. The most consistent finding across cognitive science is that effortful thinking, not tool avoidance, is what sustains cognitive strength.

"the people who adapt consciously will not be the ones asking whether intelligence is gone. They will be too busy using it differently."

Love this line! 100% agree

I’m just starting to read through this, and my first reaction is that a huge logical error often occurs when people try to debunk a popular opinion: proving that someone has a bad argument is not the same thing as proving that the observation itself is false. In many cases, all skeptics accomplish is proving that the rationalisation was not strong. The problem is that people are discredited unless they cite a scientific paper, but if a valid scientific paper does not exist, for any number of reasons, then people will obviously cite an invalid paper and the skeptics will think they have won. It’s just like in court, where a person who can’t afford a lawyer will lose even if they are innocent. If billions of people witness something with their own eyes, they will be discredited simply because a scientist has not confirmed it in a paper. And there are many reasons why some facts are not yet confirmed in papers.