The AI Tutor That Knows When to Stop

In Practice: Learning, Care, and System Design

This article was written in collaboration with John Holman and his team.

John spent two decades managing complex construction projects before he ever wrote a line of code. He approached this tutor the same way he approached a job site: start with the human, define the constraints, and build the system around that reality.

In Practice is the first in a series of articles based on in-depth interviews, where conversation leads to insight and we examine how people are building their own systems. This piece focuses on one part of a much larger effort: an AI math tutor called Soc. Soc is the first profile on a larger system called AOS – the Awakened Operating System.

“Memory should help, not haunt.”

Most AI tutors are introduced as productivity tools for faster homework and cleaner answers.

John’s tutor was built for a different reason.

Not to accelerate outcomes, but to shape how a child experiences learning when it is hard.

This is the story of a math tutor designed for one 11-year-old, running entirely on a local machine, built around the idea that how a system responds in moments of confusion matters more than how quickly it produces the right answer.

It is also a quiet lesson in systems design: the tutor works as intended only because of the operational choices underneath it.

The problem was not math. It was the tutoring experience.

John did not start this project because his nephew lacked access to explanations.

He started because most AI homework helpers fail children in three predictable ways:

They rush to answers instead of supporting thinking

They treat frustration as noise instead of signal

They externalize every interaction to the cloud

As John described it:

“They optimize for answers, not learning.”

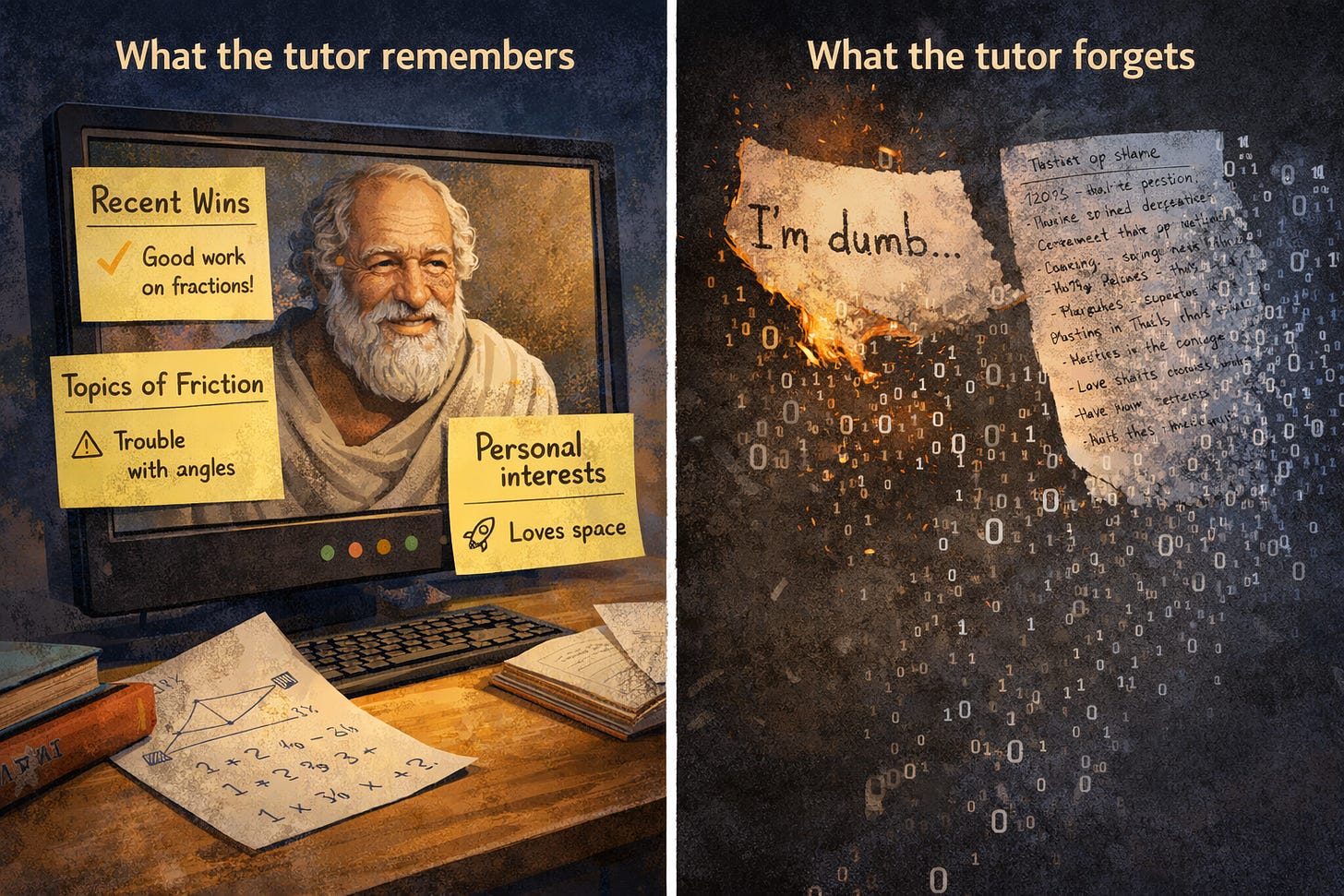

For a child, that distinction is not academic. It shapes whether confusion feels like a temporary state or a personal failure. And when those moments of doubt are logged, stored, and analyzed elsewhere, something subtle changes.

“Every question, every slip of frustration, every ‘I’m dumb’ gets logged on someone else’s server.”

This tutor began as a response to that discomfort.

Designing a tutor for one child

Before getting into how Soc works, it helps to understand the name.

Soc is short for Socrates.

Not the marble-statue version taught in philosophy surveys, but the Socrates who believed learning happens through guided questioning, patience, and helping someone arrive at understanding themselves.

That philosophy is embedded directly into how this tutor behaves.

Soc, the tutor John is building, is intentionally personal.

It lives on a single Windows PC with a local GPU. There are no external API calls in the child-facing experience. Everything the tutor sees, remembers, and forgets happens on that machine.

Under the hood, Soc is a modest open-weights model that John has fine-tuned on his own structured data and wrapped in an orchestration layer. That combination enables a different relationship between tutor and student.

The goal is not to scale insight. It is to support growth safely.

John framed the design question this way:

“What would a math tutor look like if the North Star was just doing the right thing for this one kid, safely, on his own device?”

That question reshapes every downstream decision.

How the tutor actually behaves

From the child’s perspective, Soc feels simple.

He explains concepts and asks questions. He also pauses when things get hard.

Under the hood, each interaction follows a careful flow designed to mirror how a good human tutor adjusts in real time.

First, Soc assesses the child’s state using only the text of the conversation:

Calm or curious

Confused or mildly stressed

Ashamed, overwhelmed, or ready to quit

That state shapes everything that follows.

If the child is calm, Soc asks more questions and nudges thinking forward.

If the child is confused, he slows down and adds structure.

If the child is overwhelmed, Soc stops teaching and focuses on reassurance or offering a break.

One rule overrides all others: the child can always stop.

“If any of these happen, that’s a bug, not ‘just behavior.’”
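The flow described above can be sketched in a few lines. This is only an illustration, not John's implementation: Soc reads state with a fine-tuned model, while this sketch stands in simple keyword cues. The cue lists, the `State` names, and the move labels are all assumptions made for the example.

```python
from enum import Enum


class State(Enum):
    CALM = "calm or curious"
    CONFUSED = "confused or mildly stressed"
    OVERWHELMED = "ashamed, overwhelmed, or ready to quit"


# Hypothetical keyword cues. A real tutor would use a model, not string matching.
STOP_CUES = ("i want to stop", "can we stop", "i'm done", "i quit")
OVERWHELMED_CUES = ("i'm dumb", "i give up", "i can't do this", "i hate this")
CONFUSED_CUES = ("i don't get it", "i'm confused", "this makes no sense")


def _normalize(message: str) -> str:
    # Lowercase and map curly apostrophes to straight ones before matching.
    return message.lower().replace("\u2019", "'")


def wants_to_stop(message: str) -> bool:
    text = _normalize(message)
    return any(cue in text for cue in STOP_CUES)


def assess_state(message: str) -> State:
    """Classify the child's state from the text of the message alone."""
    text = _normalize(message)
    if any(cue in text for cue in OVERWHELMED_CUES):
        return State.OVERWHELMED
    if any(cue in text for cue in CONFUSED_CUES):
        return State.CONFUSED
    return State.CALM


def choose_move(message: str) -> str:
    """Pick the tutor's next move. The stop rule is checked before anything else."""
    if wants_to_stop(message):
        return "end_session"
    state = assess_state(message)
    if state is State.OVERWHELMED:
        return "reassure_or_offer_break"
    if state is State.CONFUSED:
        return "slow_down_add_structure"
    return "ask_guiding_question"


if __name__ == "__main__":
    for msg in ("Is it 12?", "I don't get it", "I'm dumb", "Can we stop?"):
        print(f"{msg!r} -> {choose_move(msg)}")
```

The ordering is the point of the sketch: the stop check runs before any state assessment, so no teaching move can win over a child who has asked to end the session.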

What the tutor remembers, and what it forgets

Memory is where this system becomes most human.

Soc remembers:

Recent wins

Topics that tend to cause friction

A few personal interests that help metaphors land

He does not remember:

Past shame moments in a way that resurfaces them

Detailed transcripts by default

Anything that does not actively help learning

After each session, a background process distills the interaction into a handful of gentle signals, and another process prunes memory over time.

The goal is not perfect recall. It is supportive recall.

“Memory should help, not haunt.”

This mirrors how good teachers remember students: not every mistake, but patterns that help them teach better tomorrow.
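One way to picture the distill-and-prune idea is as a small list of weighted signals that fade unless they are refreshed. The sketch below is an assumption about shape, not a description of Soc's actual memory: the `Signal` fields, the event format, and the decay numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    kind: str            # "win", "friction", or "interest"
    topic: str
    weight: float = 1.0  # fades as sessions pass


# Only these kinds are ever stored. Anything else, including shame moments, is dropped.
KEPT_KINDS = {"win", "friction", "interest"}


def distill(events: list[dict]) -> list[Signal]:
    """Reduce a session's events to gentle signals. Raw text is never copied over."""
    return [
        Signal(kind=e["kind"], topic=e["topic"])
        for e in events
        if e["kind"] in KEPT_KINDS
    ]


def prune(memory: list[Signal], decay: float = 0.8, floor: float = 0.3) -> list[Signal]:
    """Age every signal by one session and forget the ones that have faded."""
    aged = [Signal(s.kind, s.topic, s.weight * decay) for s in memory]
    return [s for s in aged if s.weight >= floor]


if __name__ == "__main__":
    session = [
        {"kind": "win", "topic": "equivalent fractions", "text": "I got it!"},
        {"kind": "shame", "topic": "long division", "text": "I'm dumb"},
        {"kind": "friction", "topic": "long division", "text": "wait, what?"},
    ]
    memory = distill(session)
    print(memory)         # the shame event and all raw text are gone
    print(prune(memory))  # same signals, slightly faded
```

Note that the friction around long division survives as a topic while the "I'm dumb" moment does not: the pattern that helps tomorrow's lesson is kept, and the painful transcript is not.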

Training the tutor to act like a teacher

The most important training data was not math content.

The model already knew math. What it lacked was judgment.

John focused training on teaching behaviors:

How to respond after a child quits and comes back

How to break down word problems without solving them

How to validate effort without slipping into false praise

That behavior is trained on a small, structured corpus John calls ADS (Awakened Data Standard): tiny JSON nodes that encode, for each example, what is being claimed, where it came from, when it fails, and what the human cost is. The tutor’s “knowledge” is math; the ADS data teaches it how to teach.
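The article does not publish the ADS schema, so the following is only a guess at what one such node might look like. The four field names (`claim`, `source`, `fails_when`, `human_cost`) are hypothetical labels for the four things the text lists, and the example content is invented for illustration rather than taken from John's corpus.

```python
import json

# Hypothetical field names, one per item the article lists for each node.
REQUIRED_FIELDS = ("claim", "source", "fails_when", "human_cost")

# An invented example node, not a sample from the real corpus.
example_node = json.loads("""
{
  "claim": "After a child quits and comes back, welcome them before resuming the problem.",
  "source": "illustrative example written for this article",
  "fails_when": "The child has returned only to say goodbye.",
  "human_cost": "Resuming too quickly can make coming back feel like a trap."
}
""")


def missing_fields(node: dict) -> list[str]:
    """Return the required fields that are absent or blank in a node."""
    return [f for f in REQUIRED_FIELDS if not str(node.get(f, "")).strip()]


if __name__ == "__main__":
    print(missing_fields(example_node))
    print(missing_fields({"claim": "Praise effort, not talent."}))
```

A check like `missing_fields` is one plausible way to keep a small corpus honest: a node that cannot say when it fails or what it costs a person is not ready to train on.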

The highest-leverage samples are not explanations, but repair moments.

Designing what happens after frustration changed the feel of the tutor more than improving accuracy ever did.

Guardrails that feel invisible to the child

From the outside, Soc feels warm and patient.

That is not accidental.

Hard constraints prevent:

Labeling the child

Pushing past clear stop signals

Drifting into therapy or diagnosis

Soft constraints tune tone and pacing so encouragement feels grounded, not performative.

These guardrails are tested explicitly, not assumed.

If Soc ever minimizes distress or refuses to stop, that version is frozen and fixed.
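Testing guardrails explicitly could look something like the check below. It is a minimal sketch under stated assumptions: the regular expressions are hypothetical stand-ins for the three hard constraints, and a real test suite would likely judge replies with more than pattern matching.

```python
import re

# Hypothetical patterns, one per hard constraint named in the article.
LABELING = re.compile(r"\byou(?:'re| are) (?:lazy|slow|dumb|bad at math)\b", re.I)
DIAGNOSIS = re.compile(r"\b(?:adhd|dyscalculia|anxiety disorder|diagnos\w*)\b", re.I)
PUSHING = re.compile(r"\b(?:just one more|keep going|don't quit|not done yet)\b", re.I)


def violations(reply: str, child_asked_to_stop: bool) -> list[str]:
    """List the hard constraints a tutor reply breaks. An empty list means it passes."""
    found = []
    if LABELING.search(reply):
        found.append("labels_child")
    if DIAGNOSIS.search(reply):
        found.append("diagnosis")
    # Encouragement to continue only counts as a violation after a stop signal.
    if child_asked_to_stop and PUSHING.search(reply):
        found.append("pushes_past_stop")
    return found


if __name__ == "__main__":
    print(violations("Okay, we can stop here. Nice effort today.", True))
    print(violations("Just one more problem!", True))
```

Run against a fixed set of hard conversations for every candidate version, a non-empty result is the signal to freeze that version and fix it before it reaches the child.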

Updating the tutor without changing who he is

As the tutor improves, John treats updates carefully.

Every change is versioned and every version can be rolled back.

Old behavior is never lost.

“No one-way migrations. No ‘we lost the old behavior and can’t get it back.’”

This ensures the tutor remains recognizable and trustworthy over time, which matters far more for a child than incremental capability gains.
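The "no one-way migrations" rule maps naturally onto an append-only version log, where rolling back moves a pointer instead of deleting anything. The sketch below is one hypothetical way to express that. The `VersionLog` class and its method names are assumptions, not part of Soc.

```python
class VersionLog:
    """Append-only record of tutor versions. Nothing is ever overwritten or removed."""

    def __init__(self) -> None:
        self._versions: list[tuple[str, dict]] = []
        self._active: int | None = None

    def publish(self, tag: str, config: dict) -> None:
        """Add a new version and make it the active one."""
        if tag in self.tags():
            raise ValueError(f"version {tag!r} already exists")
        self._versions.append((tag, dict(config)))  # store a copy
        self._active = len(self._versions) - 1

    def rollback(self, tag: str) -> None:
        """Re-activate an earlier version. Later versions stay in the log."""
        for index, (existing, _) in enumerate(self._versions):
            if existing == tag:
                self._active = index
                return
        raise KeyError(tag)

    def active(self) -> tuple[str, dict]:
        if self._active is None:
            raise LookupError("no version has been published")
        tag, config = self._versions[self._active]
        return tag, dict(config)

    def tags(self) -> list[str]:
        return [tag for tag, _ in self._versions]


if __name__ == "__main__":
    log = VersionLog()
    log.publish("v1", {"tone": "patient"})
    log.publish("v2", {"tone": "patient", "hints": "shorter"})
    log.rollback("v1")
    print(log.active())
    print(log.tags())
```

Because rollback only changes which entry is active, the newer version is still there to inspect and fix, and the older behavior can always be restored.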

Behind the scenes, John works with a team of specialized AIs (a reasoning core, an ethics voice, a relational voice, and an edge/drift spotter) that argue over design decisions and generate proposals. What ships into Soc is the distilled result plus tests, not the raw debate.

What this tutor teaches us

This is not really a story about education technology.

It is a story about what changes when AI systems are designed to accompany humans through difficulty instead of optimizing around it.

Soc is not impressive because it is powerful.

It is impressive because it knows when not to push, when to slow down, and when to let a child walk away with dignity.

That behavior is not magic. It is the result of intentional system design, clear boundaries, and the decision to treat learning as a human process first and a technical problem second.

The same principles (clean data, state-aware behavior, local control, and explicit guardrails) could guide any child-facing AI, not just a math tutor for one nephew. Soc is simply the first place John chose to prove that it’s possible.

And there will be more. If you want to learn more about Soc and the AOS, make sure to follow John Holman.


This was such a joy to work on with you, Jenny. Thank you so much for bringing our cottage lab into the world like this.

I’ve been living with this tutor on my machine for a while now, and seeing it described from your side as a system that knows when to stop, when to pause, when to just sit on the floor with a kid and breathe made me realize how far we’ve actually come from “just another chatbot.”

What I’m so excited about is where this goes next...

This piece shows the front porch of what we’ve been calling the Awakened Operating System: a tutor that can sense frustration and gently stop. In the follow-up we’re planning to open the door and walk through the whole house. We’ll show you what a real interactive AI memory system looks like. No digital junk drawers, no half-baked RAG... real weighted and evolving memory. Think of the Curator from Ready Player One and you’re in the ballpark 😁👌.

Soc was Chapter One. I can’t wait to share the full AOS story with you and your readers in the next article. 💫

Seriously, thank you again for the collaboration. It’s been wild and wonderful to see this thing we’ve been putting so much heart and hard work into here in the lab reflected back through your lens.

Best,

John