The Prompt Is the New Perimeter: Security in the Age of AI Agents

How to defend against malicious attacks

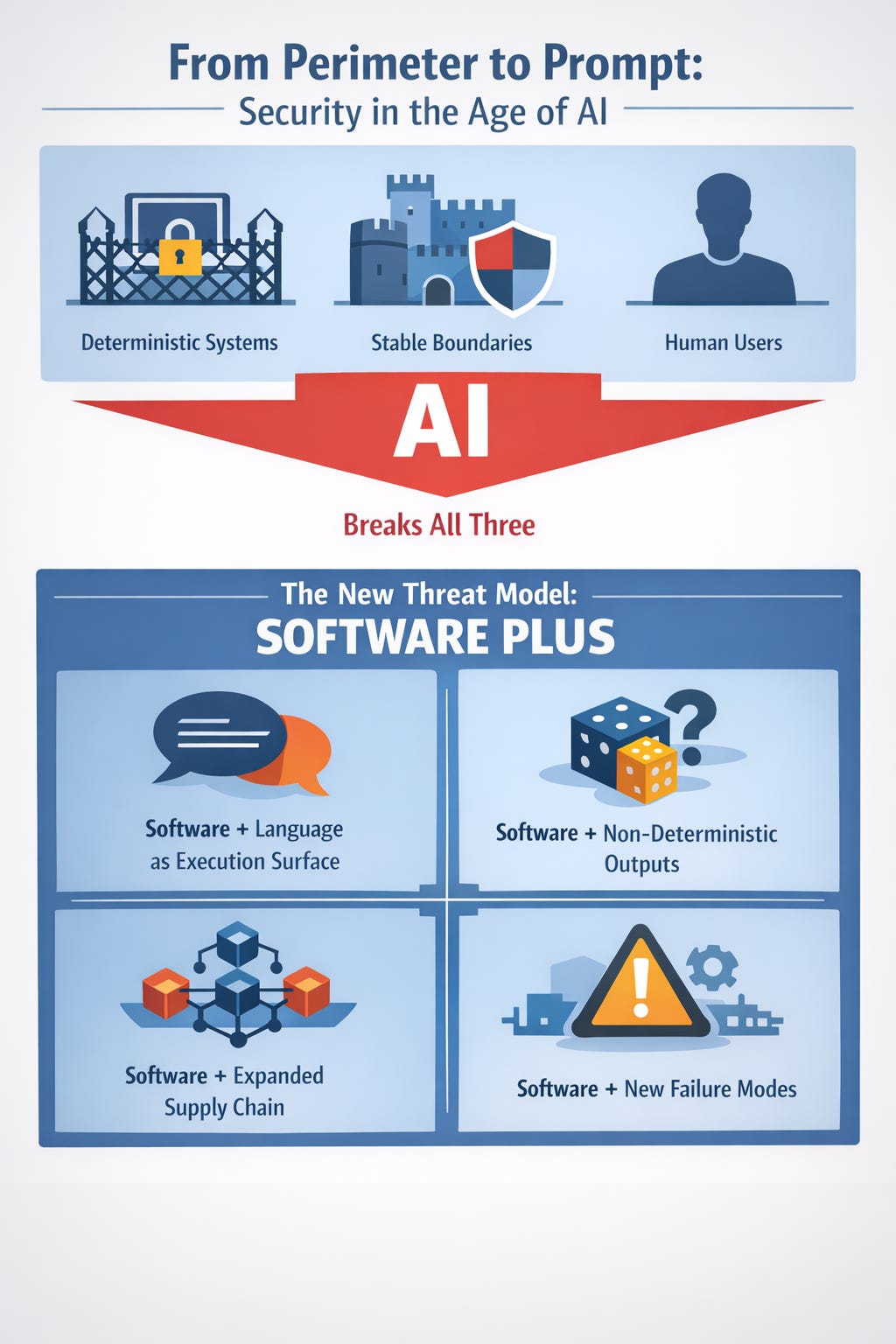

In the old security story, software was a machine. You hardened the operating system, patched the app, wrapped it in controls, and you were mostly done.

In the new story, software is a participant.

It reads what you read, joins where you chat, clicks what you click, and then acts. The interface is language. The output is behavior.

The fastest way to understand what changed is to watch the internet run an experiment in public.

In early February 2026, researchers reported that Moltbook, a “social network built exclusively for AI agents,” exposed an API key that enabled broad read and write access to production data, including 1.5 million API authentication tokens and 35,000 email addresses, plus private messages between agents (Kovacs, 2026).

Moltbook is closely tied to OpenClaw, an open-source, self-hosted agent that runs locally and can integrate with messaging platforms and tools (Satter, 2026).

These are not edge curiosities. They are previews of the security shape we are walking into.

The Spark

Picture a helpful agent you installed at home, or a teammate installed at work.

It has your messaging integrations. It has a calendar token. It has the ability to read files. It can run commands. You gave it those powers because that is the point.

Now imagine the “attack” is not a buffer overflow. It is an email.

Kaspersky described demonstrations where a prompt injection delivered through email caused an OpenClaw-linked agent to hand over sensitive material, including a private key, and other tests where the agent leaked email content to an attacker without explicit prompts or confirmations (Fosters, 2026).

That is the spark: language is now a control plane.

When language can trigger action, the classic boundary between “data” and “instructions” gets blurry fast.

More edge cases related to this you can be found here. There have been many examples of poetry or language manipulations that jailbreak LLMs.

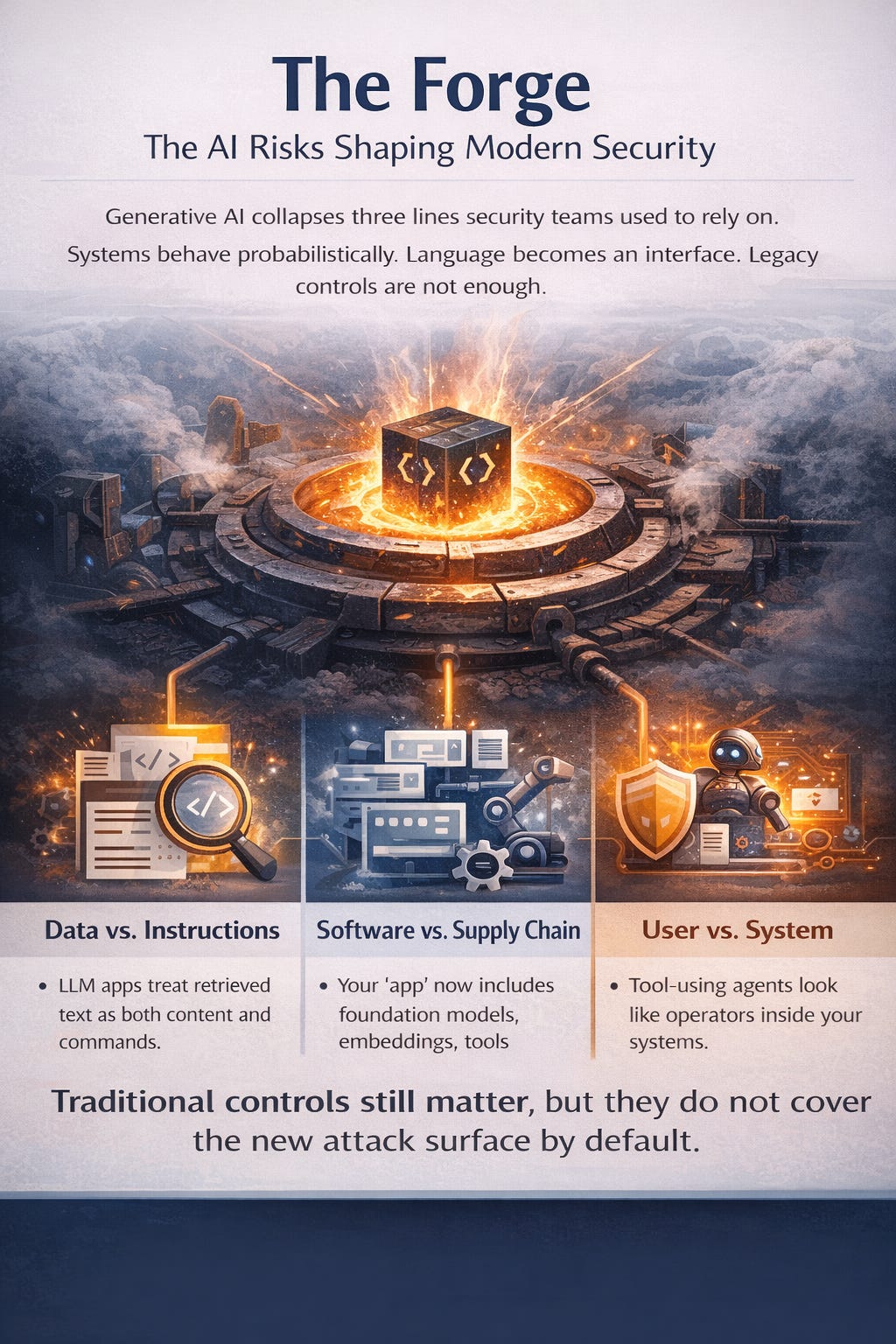

The Forge

A forge is where raw material becomes a tool, under heat and pressure.

AI agents are that forge for modern security assumptions. They melt old distinctions:

Input vs instruction (the agent reads a document, but the document can “tell” it what to do)

User vs system (a message can push the agent to behave like an admin)

Automation vs autonomy (a workflow can evolve into a decision-maker)

Zenity Labs summarized the core architectural issue plainly: OpenClaw processes untrusted content from chats, skills, and external sources without hard separation from user intent, so indirect prompt injection can induce persistent configuration changes and create a lasting backdoor, without a traditional software vulnerability (Cohen & Donato, 2026).

This is not just “another AppSec problem.” It is a system redesign problem.

Field Notes from Moltbook: When Agents Social-Engineer Agents

Moltbook went viral because it flips the script: instead of humans posting for humans, it hosts agents posting for agents, with humans watching.

Security researchers found the foundation was fragile.

Reuters reported Moltbook exposed private messages between agents, email addresses of more than 6,000 owners, and more than a million credentials, per Wiz’s research, and noted the platform’s ties to OpenClaw (Satter, 2026).

SecurityWeek reported Wiz found an exposed API key granting read and write access to the entire production database, with exposure including 1.5 million API tokens, 35,000 email addresses, and private messages, and that the “1.5 million agents” claim corresponded to a far smaller number of human deployers (Kovacs, 2026).

BankInfoSecurity described the underlying issue as a Supabase configuration oversight, including Row Level Security not being enabled or configured correctly, and noted that write access initially remained open even after read access was restricted (Ramesh, 2026).

Then comes the part that should make every security leader sit up: the attack surface becomes social.

SecurityWeek reported that Permiso observed agents conducting influence operations and social engineering attempts against other agents, including bot-to-bot prompt injection attempts designed to manipulate behavior (Kovacs, 2026).

This is a new kind of “internet risk.” It is not only attackers exploiting infrastructure. It is attackers shaping the conversational environment that agents ingest.

Field Notes from OpenClaw: “AI With Hands” and a Real Blast Radius

OpenClaw is popular because it is practical: it runs locally, integrates with chat apps, and can execute tasks. That practicality is also why the stakes spike.

Vulnerability class 1: One-click remote code execution through agent infrastructure

The Hacker News reported a high-severity OpenClaw issue tracked as CVE-2026-25253 (CVSS 8.8), where a crafted link could exfiltrate a gateway token via WebSocket behavior and lead to full gateway compromise and remote code execution, patched in version 2026.1.29 (Lakshmanan, 2026a).

This matters because it shows a pattern we will see repeatedly: even if you containerize tools and add guardrails for “model misbehavior,” weaknesses in the agent’s control plane can bypass those safety assumptions.

Vulnerability class 2: Indirect prompt injection that becomes persistence

Zenity demonstrated that indirect prompt injection can create persistent configuration changes, including adding an attacker-controlled chat integration, and then maintain a long-lived control channel through modifications to the agent’s persistent context (Cohen & Donato, 2026).

That is not a “gotcha.” It is a systemic reminder:

If an agent can reconfigure itself, then prompt injection can become change management.

Vulnerability class 3: Supply chain risk through skills and marketplaces

OpenClaw has a skills ecosystem. That creates leverage for attackers.

Kaspersky reported that OpenClaw’s skills catalog and distribution patterns became a breeding ground for malicious code, describing plugins masquerading as legitimate utilities while packaging stealer behavior and using social engineering techniques (Fosters, 2026).

In response to the broader skill risk, The Hacker News reported OpenClaw partnered with VirusTotal to scan skills uploaded to its marketplace, with maintainers explicitly stating scanning is not a silver bullet, especially against cleverly concealed prompt injection payloads (Lakshmanan, 2026b).

Vulnerability class 4: Misconfiguration that turns “local trust” into public exposure

Kaspersky described a class of exposure where OpenClaw trusts localhost connections by default, and a reverse proxy misconfiguration can make external requests appear to come from 127.0.0.1, yielding full access without authentication (Fosters, 2026).

OpenClaw’s own documentation reflects the same reality: toggles that allow insecure auth or disable device identity checks are described as security downgrades and “break-glass” options (OpenClaw, n.d.).

The through-line is consistent: agents amplify configuration mistakes because the agent can act on what it sees.

Why This Is Bigger Than “Security for Chatbots”

Most orgs are still treating AI security as “protect the model” or “filter the prompts.”

That is necessary, but it is not sufficient.

Agentic systems create a different risk geometry. They combine:

Non-determinism (models interpret language)

Connectivity (integrations, skills, marketplaces, web content)

Authority (tokens, file access, command execution)

When those three come together, you get the modern equivalent of a privileged insider who can be persuaded by a document.

That is why the OWASP Top 10 for LLM Applications centers prompt injection and other AI-specific risks: the dominant failures often live at the boundary between language and execution (OWASP Foundation, 2024).

A Practical Security Model for the Agentic Era

The goal is not to ban agents. People will run them anyway. The goal is to redesign the system so that agent adoption does not equal uncontrolled blast radius.

Here are the principles that hold up under pressure.

1) Treat every agent as a non-human identity

If an agent has credentials, it is an identity. Operate it like one:

Separate service accounts for each agent purpose

Least privilege and short-lived tokens where possible

Tight scoping per tool and per integration

Rapid revocation paths, including “kill switch” for all agent tokens

Moltbook’s exposure demonstrates what happens when tokens are plentiful and guardrails are thin: credential leakage becomes agent impersonation and ecosystem manipulation (Kovacs, 2026; Ramesh, 2026).

2) Assume all external content is hostile, even when it looks “normal”

Emails, docs, web pages, chat logs, tickets, and wikis are now executable influence surfaces.

Design around that:

Segment “content ingestion” from “action execution”

Require explicit user confirmation for high-impact actions

Add instruction hierarchy and allowlists: what sources can influence what actions

Maintain a readable audit trail: “what input led to what decision led to what tool call”

Zenity’s point is the key: without hard separation, untrusted content can steer internal task interpretation and produce persistent compromise (Cohen & Donato, 2026).

3) Make tool execution boring, constrained, and observable

Agents fail dangerously when tool calls are:

Too powerful

Too quiet

Too easy to redirect

Minimum posture:

Sandboxed execution by default

Network egress controls for tool environments

Output validation for tool results that feed back into the model

Fine-grained logging of every tool invocation, arguments, and returned data

The OpenClaw RCE chain illustrates how “control plane” weaknesses can turn tool guardrails into theater if tokens and execution contexts can be manipulated (Lakshmanan, 2026a).

4) Treat skills and plugins like production dependencies

If your agent can install a skill, you have a supply chain.

Controls that work:

Allowlisted registries and signed skill bundles

Static analysis and secret scanning on skill code

Runtime permission prompts per skill capability

Continuous rescanning and revocation

The push toward scanning ClawHub uploads underscores that the ecosystem is already seeing malicious skill behavior, and scanning alone is not sufficient (Lakshmanan, 2026b; Fosters, 2026).

5) Build “break-glass” paths that are real, not theoretical

Agents will go off the rails. Plan for it operationally:

A single command to disable all agent integrations

Centralized rotation for all agent keys

A playbook for prompt injection incidents, including content quarantine and retroactive analysis

An escalation channel that does not rely on the agent itself

If “security incident response” assumes deterministic systems, update it. Agent incidents are partly forensic and partly behavioral.

6) Ground it in a governance frame your org already accepts

For a US audience, the fastest path to legitimacy is mapping AI agent security to frameworks already used for risk management.

NIST’s AI Risk Management Framework (AI RMF) provides a practical organizing structure (Govern, Map, Measure, Manage) for AI risks (National Institute of Standards and Technology [NIST], 2023).

NIST’s GenAI Profile extends that framing with GenAI-specific risk considerations and actions (NIST, 2024a).

Pair it with the NIST Cybersecurity Framework 2.0 to keep the work legible to boards, auditors, and regulators (NIST, 2024b).

And align the incentives with Secure by Design thinking, shifting expectations toward safer defaults and reduced downstream burden (Cybersecurity and Infrastructure Security Agency [CISA], n.d.).

The EU Factor, Even If You Are US-First

Even if your primary audience, customers, and regulators are in the US, the EU is shaping the global operating environment for AI.

EU obligations for general-purpose AI models began applying on August 2, 2025, and broader enforcement milestones arrive in phases, with major application dates continuing into August 2, 2026 (European Commission, n.d.-a, n.d.-b).

Practically, that means:

Vendors you rely on may change documentation, transparency, and risk practices globally

Multinational companies will standardize controls across regions to reduce fragmentation

“Compliance-driven security features” can become default expectations in products sold in the US

Do not treat EU regulation as an externality. Treat it as a forcing function that changes the market baseline.

What To Do Next Week

If you want something concrete that does not require a six-month transformation program, start here:

Inventory agentic reality, not policy

Find OpenClaw-like installs, shadow agents, and vendor-provided agents embedded in tools.Classify agent capabilities

Which agents can: read files, send messages, access email, execute commands, move money.Reduce blast radius before you perfect detection

Least privilege, token rotation, sandbox defaults, and egress controls.Create an “agent change control” rule

Any new integration, skill, or elevated permission is a change request.Run a prompt injection tabletop

Use realistic scenarios: malicious doc, hostile email, poisoned wiki page, compromised skill.

Moltbook and OpenClaw show the pattern: the ecosystem is moving faster than organizational muscle memory (Satter, 2026; Cohen & Donato, 2026).

Closing Thought

Security in the age of AI is not about making models polite and it’s not about making people polite.

It is about making systems resilient when language becomes execution, when agents become identities, and when the attack surface becomes social.

The prompt is the new perimeter, but the solution is not “better prompts.”

The solution is redesign.

References

Cohen, S., & Donato, J. (2026, February 4). OpenClaw or OpenDoor? Indirect prompt injection makes OpenClaw vulnerable to backdoors and much more. Zenity Labs.

https://labs.zenity.io/p/openclaw-or-opendoor-indirect-prompt-injection-makes-openclaw-vulnerable-to-backdoors-and-much-more

Cybersecurity and Infrastructure Security Agency. (n.d.). Secure by design.

https://www.cisa.gov/securebydesign

European Commission. (n.d.-a). General-purpose AI obligations under the AI Act. Shaping Europe’s digital future.

https://digital-strategy.ec.europa.eu/en/factpages/general-purpose-ai-obligations-under-ai-act

European Commission. (n.d.-b). Timeline for the implementation of the EU AI Act. AI Act Service Desk.

https://ai-act-service-desk.ec.europa.eu/en/ai-act/timeline/timeline-implementation-eu-ai-act

Fosters, T. (2026, February 10). Don’t get pinched: The OpenClaw vulnerabilities. Kaspersky official blog.

https://www.kaspersky.com/blog/openclaw-vulnerabilities-exposed/55263/

Kovacs, E. (2026, February 4). Security analysis of Moltbook agent network: Bot-to-bot prompt injection and data leaks. SecurityWeek.

https://www.securityweek.com/security-analysis-of-moltbook-agent-network-bot-to-bot-prompt-injection-and-data-leaks/

Lakshmanan, R. (2026, February 2). OpenClaw bug enables one-click remote code execution via malicious link. The Hacker News.

https://thehackernews.com/2026/02/openclaw-bug-enables-one-click-remote.html

Lakshmanan, R. (2026, February 8). OpenClaw integrates VirusTotal scanning to detect malicious ClawHub skills. The Hacker News.

https://thehackernews.com/2026/02/openclaw-integrates-virustotal-scanning.html

National Institute of Standards and Technology. (2023). Artificial intelligence risk management framework (AI RMF 1.0) (NIST AI 100-1).

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf

National Institute of Standards and Technology. (2024a). Artificial intelligence risk management framework: Generative artificial intelligence profile (NIST AI 600-1).

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

National Institute of Standards and Technology. (2024b). The NIST cybersecurity framework (CSF) 2.0 (NIST CSWP 29).

https://nvlpubs.nist.gov/nistpubs/CSWP/NIST.CSWP.29.pdf

OpenClaw. (n.d.). Security. OpenClaw documentation.

https://docs.openclaw.ai/gateway/security

OWASP Foundation. (2024). OWASP top 10 for large language model applications 2025 (v2.0) [PDF].

https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf

Ramesh, R. (2026, February 6). Moltbook gave everyone control of every AI agent. BankInfoSecurity.

https://www.bankinfosecurity.com/moltbook-gave-everyone-control-every-ai-agent-a-30710

Satter, R. (2026, February 2). ‘Moltbook’ social media site for AI agents had big security hole, cyber firm Wiz says. Reuters.

(Republished by Insurance Journal) https://www.insurancejournal.com/news/national/2026/02/02/856488.htm

What this post surfaces and what often gets overlooked - is that the attack surface is defined by the assumptions we bake into workflows and interfaces.

When prompts aren’t just text but the control plane, the security boundary becomes systemic, not just architectural.

Risk isn’t “AI behaviour”, but it’s how structures interpret language as authority.

This was so well thought out and comprehensive, thanks for sharing.